As we move more of our solutions to the cloud, a growing number of PaaS options are appearing that can help us solve our problems without reinventing the wheel.

If your application needs a search service that can quickly query a proprietary or legacy data source, I highly recommend Azure Search. It offers many features, including fast setup, flexible data sources, a great REST API, and extensive monitoring and control via the Azure Portal. You can read more about Azure Search here.

This blog post, however, is about how to handle the issues that can come up when deploying an Azure Search service and its indexes via a CI/CD DevOps pipeline.

The best way to deploy the search service itself is via an ARM template. The template only sets up the search service; it can't be used to create or populate the indexes. Below is a sample template, which is all you need to get started.

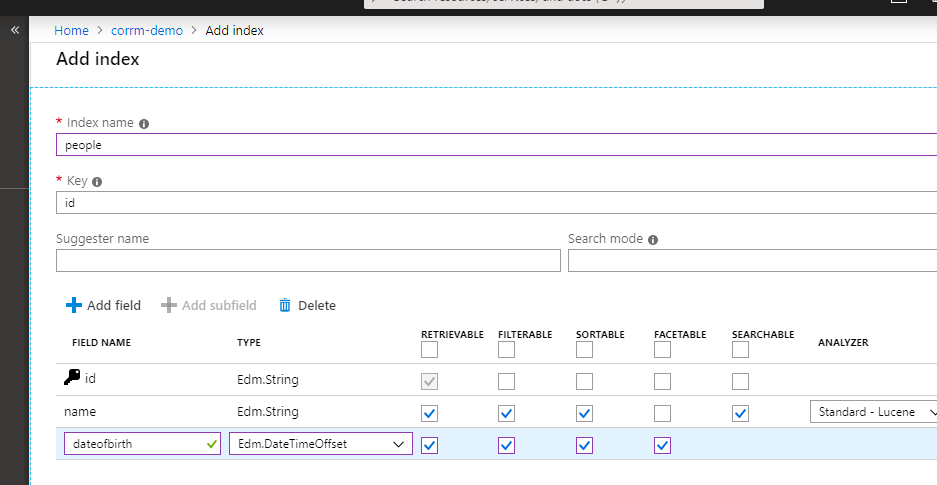

To create your search indexes, you can either use the Azure Portal or write JSON index definition files. These files can be sent to the search service via the REST API or an SDK as part of an automated deployment pipeline.
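As a rough illustration of the REST route, here is a minimal Python sketch (the author's own tooling is PowerShell) that builds a "Create or Update Index" request: an HTTP `PUT` to `https://<service>.search.windows.net/indexes/<index>?api-version=...` with an admin key in the `api-key` header and the JSON definition as the body. The API version `2020-06-30`, the function name `build_index_request`, the service name and the sample `people` definition are assumptions for illustration only.

```python
import json
import urllib.request

# Assumption: a GA REST API version; use whichever version your definitions target.
API_VERSION = "2020-06-30"


def build_index_request(service_name: str, index_definition: dict,
                        admin_key: str, api_version: str = API_VERSION) -> urllib.request.Request:
    """Build a PUT request that creates or updates one index from its JSON definition."""
    index_name = index_definition["name"]
    url = (f"https://{service_name}.search.windows.net/indexes/{index_name}"
           f"?api-version={api_version}")
    body = json.dumps(index_definition).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        method="PUT",
        headers={"Content-Type": "application/json", "api-key": admin_key},
    )


if __name__ == "__main__":
    # Hypothetical service name, key and index definition.
    definition = {
        "name": "people",
        "fields": [
            {"name": "id", "type": "Edm.String", "key": True},
            {"name": "fullName", "type": "Edm.String", "searchable": True},
        ],
    }
    request = build_index_request("my-search-service", definition, "<admin-key>")
    print(request.get_method(), request.full_url)
    # To actually send it: urllib.request.urlopen(request)
```

Because the index name is part of the URL, re-running the same request against an existing index updates it rather than failing, which is what makes this approach suitable for repeatable deployments.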

The Azure Portal is a good way to get started. You can design your index and ensure all the correct properties are there.

For automated deployments, it is preferable to keep the index definitions as JSON files, either written by hand or exported from the portal. These files should live in a git repository that is linked to your build and deploy pipelines. During deployment, the pipeline picks up the files and applies them to the search service.
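A deployment step that picks up those files might look roughly like the following Python sketch. It assumes, hypothetically, that all index definitions sit in a single folder as `*.json` files and that each one carries the index `name` (as exported definitions do). The function name `load_index_definitions` is illustrative, not part of any SDK.

```python
import json
import tempfile
from pathlib import Path


def load_index_definitions(folder: str) -> list[dict]:
    """Load every *.json index definition in a folder, in a stable (sorted) order."""
    definitions = []
    for path in sorted(Path(folder).glob("*.json")):
        with path.open(encoding="utf-8") as handle:
            definition = json.load(handle)
        # Each definition must name its index; fail the deployment early if not.
        if "name" not in definition:
            raise ValueError(f"{path.name} has no 'name' property")
        definitions.append(definition)
    return definitions


if __name__ == "__main__":
    # A throwaway folder stands in for the repository's index folder.
    with tempfile.TemporaryDirectory() as folder:
        Path(folder, "people.json").write_text(
            json.dumps({"name": "people", "fields": []}), encoding="utf-8")
        Path(folder, "orders.json").write_text(
            json.dumps({"name": "orders", "fields": []}), encoding="utf-8")
        print([d["name"] for d in load_index_definitions(folder)])  # prints ['orders', 'people']
```

Sorting the file names keeps the deployment order deterministic between runs, which makes pipeline logs easier to compare.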

With automated deployments, pushing index changes out to the various environments becomes a painless process. Storing the JSON files in a git repository also keeps a change history and allows concurrent development of the index definitions.

A standard Azure Search service allows up to 50 indexes. If your application searches through large amounts of data, it may need all 50. However, if you try to create all 50 indexes at once as part of a deployment pipeline, you will end up with an error like:

Error with creating index people.json. The remote server returned an error: (503) Server Unavailable. Detailed error: You are sending too many requests. Please try again later.

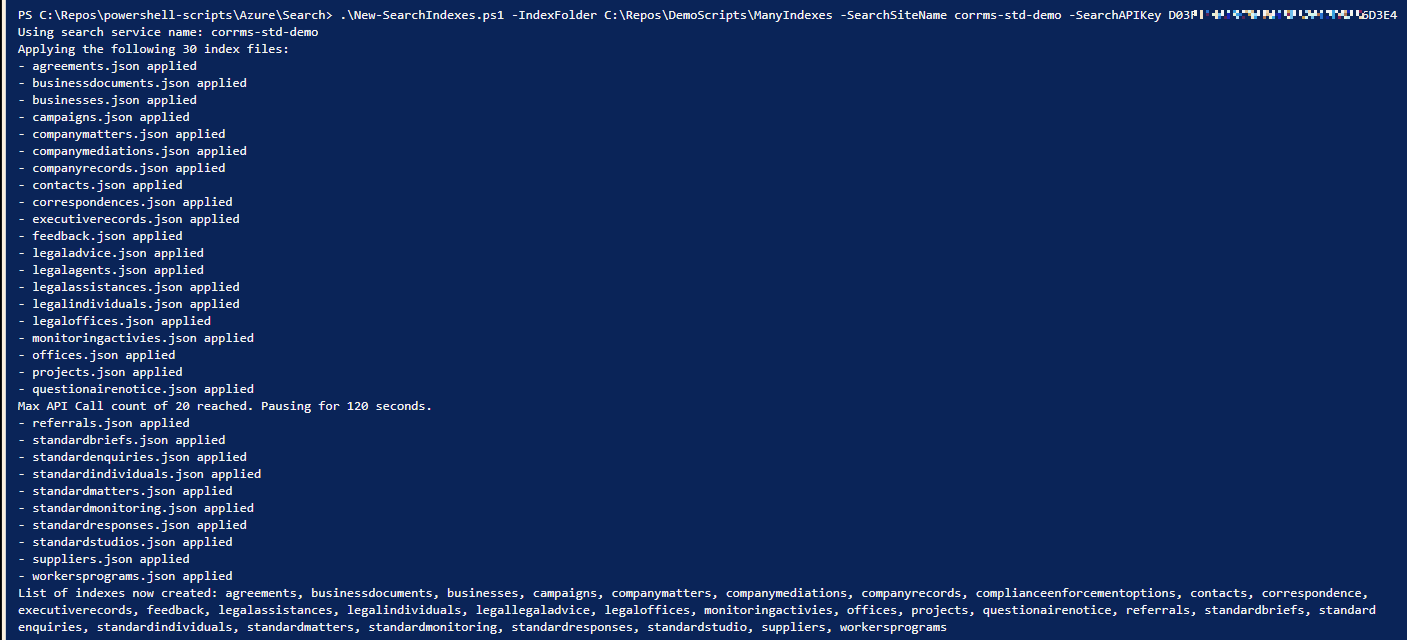

Unfortunately, Azure Search only lets you create about 25 indexes in a 2-minute window. This means that to reach the allowed 50, you have to create 25 indexes, wait 2 minutes and then create another 25.
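The cost of this limit can be worked out directly. Assuming, based on the behaviour described above, that the window resets roughly two minutes after each pause, deploying $N$ indexes in batches of $B$ needs $\lceil N/B \rceil$ batches and therefore one pause fewer than that:

$$
\text{pauses} = \left\lceil \frac{N}{B} \right\rceil - 1, \qquad
\text{waiting time} \approx 2\,\text{min} \times \left( \left\lceil \frac{N}{B} \right\rceil - 1 \right)
$$

For $N = 50$, batches of $B = 25$ give $\lceil 50/25 \rceil - 1 = 1$ pause (about 2 minutes), while a more cautious $B = 20$ gives $\lceil 50/20 \rceil - 1 = 2$ pauses (about 4 minutes). The time spent actually creating the indexes comes on top of that.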

To work around this, your deployment script needs to take these restrictions into account and build a delay into the process. I have written a PowerShell script, defined here, that does exactly that.

If you use the script (or some variation of it) in your deployment pipeline, it will pause after deploying 20 indexes, staying below the limit, and resume 2 minutes later. This repeats until all indexes have been deployed.
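The PowerShell script itself is not reproduced here; the following is a minimal Python sketch of the same pause-and-resume idea. `deploy_in_batches` and its parameters are hypothetical names. `create_index` is assumed to be whatever function sends one definition to the service (for example, the REST "Create or Update Index" call), and the defaults of 20 indexes per batch and a 120-second pause mirror the numbers above.

```python
import time
from typing import Callable


def deploy_in_batches(definitions: list[dict],
                      create_index: Callable[[dict], None],
                      batch_size: int = 20,
                      pause_seconds: float = 120,
                      sleep: Callable[[float], None] = time.sleep) -> int:
    """Create indexes in batches, pausing between batches to stay under the rate limit.

    Returns the number of pauses taken.
    """
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    pauses = 0
    for start in range(0, len(definitions), batch_size):
        if start > 0:
            # The previous batch is done and more remain: wait for the window to reset.
            sleep(pause_seconds)
            pauses += 1
        for definition in definitions[start:start + batch_size]:
            create_index(definition)
    return pauses


if __name__ == "__main__":
    created = []
    fake_definitions = [{"name": f"index-{i}"} for i in range(50)]
    # Stand-ins: record each name instead of calling the service, and print instead of waiting.
    pauses = deploy_in_batches(fake_definitions,
                               lambda d: created.append(d["name"]),
                               sleep=lambda seconds: print(f"pausing {seconds}s"))
    print(len(created), "indexes created with", pauses, "pauses")
```

Passing `create_index` and `sleep` in as parameters keeps the pacing logic separate from the HTTP calls, so the batching can be checked without actually waiting two minutes.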

So why is this limitation there in the first place? I asked Microsoft Support and, as of the date this blog was published, have not received a satisfactory answer.

I assume they expected users to create indexes manually in the Azure Portal rather than through an automated process. This is a bit disappointing, as a standard search service can hold up to 50 indexes, and having to wait up to about 6 minutes just to deploy them is not ideal.

Hopefully this will be fixed soon, but until then the solution detailed here is ready for people to use if required.