Overview

Apache Kafka is widely used and underpins some of the largest and most important systems in the world, processing trillions of messages per day. It serves as the pipeline backbone for many companies in the financial and tech industries.

Before I continue, I want to set some expectations. The point of this article is not to explain the intricacies or use cases of Kafka and its architecture, but rather to illustrate one of the libraries that can be used for Kafka testing, along with our approach and experience, and to show how Zerocode allowed us to perform integration, unit and end-to-end (E2E) testing. The article is intended for readers who are already acquainted with Kafka and its applications, or who at the very least have a strong theoretical knowledge of it. To follow our setup, you will need to clone https://github.com/authorjapps/zerocode and have a monitoring tool (we used Confluent Control Center) installed.

Use case

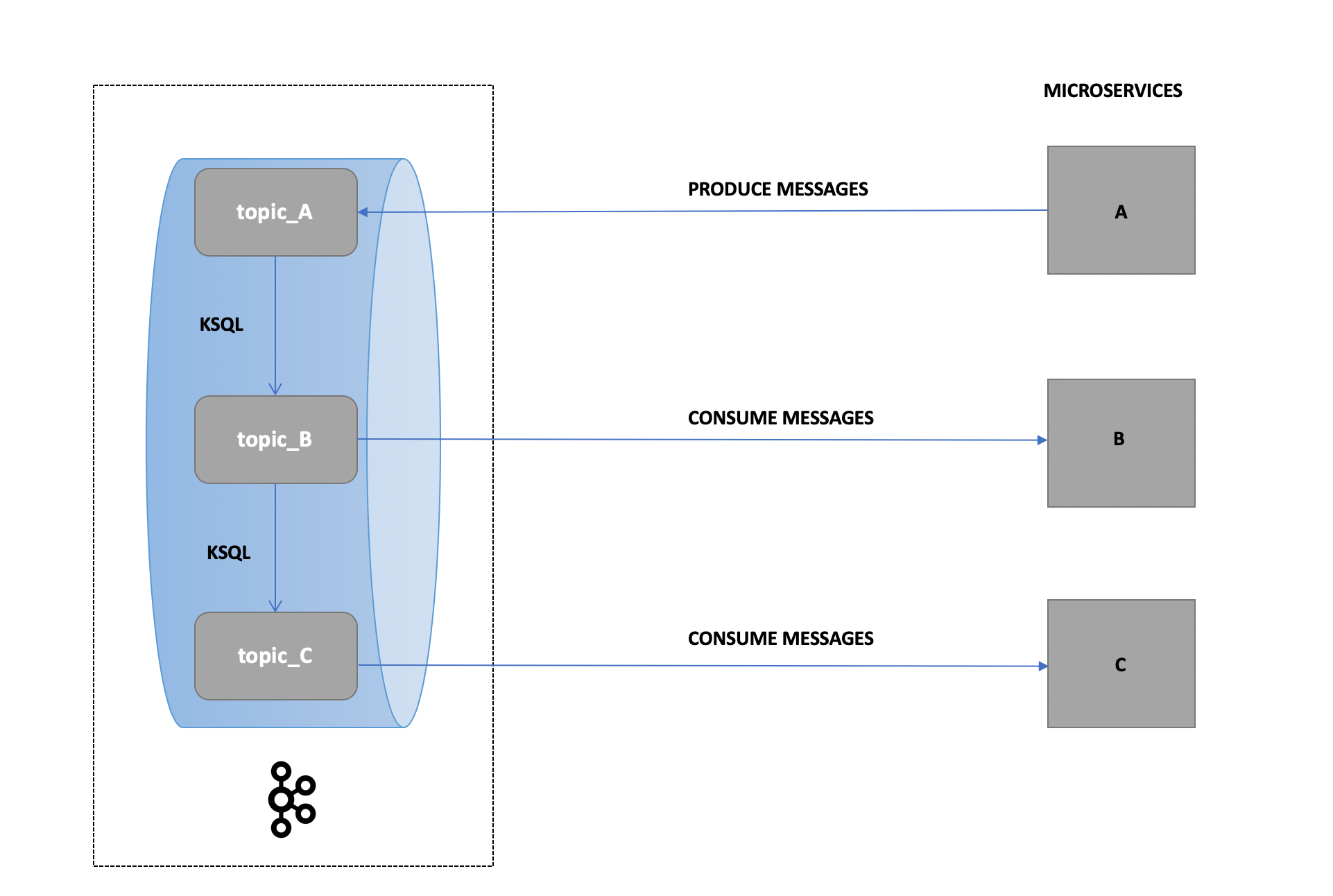

Because of project confidentiality and our commitment to the client, I will present our use case as a generic scenario that you might face while testing Kafka. Please feel free to reach out via comments or LinkedIn to discuss your specific scenario. The project had 30+ microservices producing messages to and consuming messages from Kafka, performing certain transformations and validations on the messages in the process.

Our Approach

We decided to use Zerocode because of its step chaining and its simple, horizontally scalable format, which let us write tests as plain JSON with payload and response assertions (using JSON Path: https://github.com/json-path/JsonPath/blob/master/README.md#path-examples).

Confluent Control Center (running locally) was our platform of choice for visibility into, and monitoring of, our test cases.

To simulate one of our test scenarios (i.e. a message produced by microservice A and then consumed and validated by microservice B), we produced a message to topic_A and used KSQL to write it to topic_B. We then consumed from topic_B and performed the assertions, executed another KSQL query to pass the payload on to topic_C, and repeated the process. Zerocode’s declarative JSON style allowed us to do this efficiently.

Challenge

As is commonly known, Kafka guarantees message ordering only within a single partition, not across the partitions of a topic!

ZEROCODE’S SUGGESTION:

In our discussion with the Zerocode community, it was suggested that this can be addressed by defining:

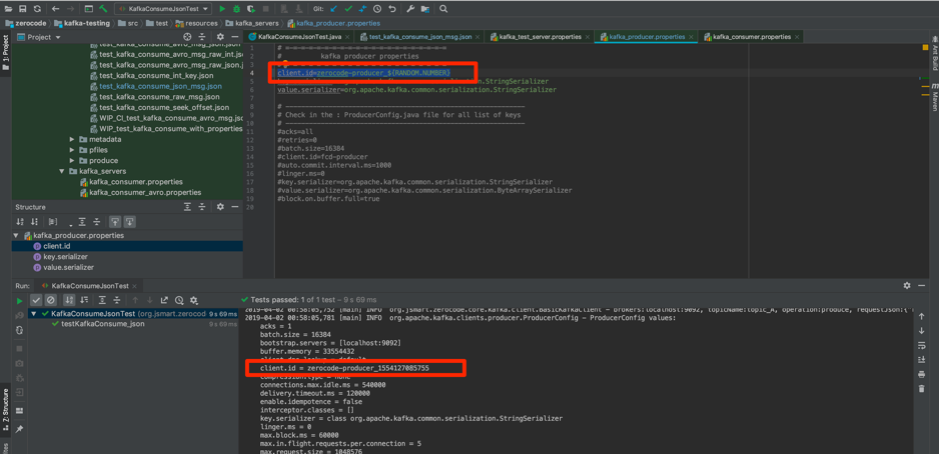

- client.id in: /zerocode/kafka-testing/src/test/resources/kafka_servers/kafka_producer.properties

- group.id in: /zerocode/kafka-testing/src/test/resources/kafka_servers/kafka_consumer.properties

Note:

In Kafka, client.id lets you easily correlate requests on the broker with the client instance that made them. For more examples and details, see: https://docs.confluent.io/current/clients/producer.html

The group.id property identifies the consumer group that a set of consumers belongs to. You can learn more here: https://jaceklaskowski.gitbooks.io/apache-kafka/kafka-properties-client-id.html?q=group.id

EXAMPLE 1:

Zerocode allows you to configure, for example, client.id = zerocode-producer_${RANDOM.NUMBER}, and supports various other placeholders. Keeping the ID unique helps with tracing and testing.

With client.id defined as in the example above, each test execution is assigned a unique ID.

Sample results (here with the prefix test_producer):

- 1st run - client.id = test_producer_1553209530873

- 2nd run - client.id = test_producer_1553209530889

- 3rd run - client.id = test_producer_1553209530893

The numeric suffix is the current timestamp in numeric form, which makes the ID unique for each run.
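The effect of a timestamp suffix can be sketched in plain Java. This is only an illustration, not how Zerocode resolves ${RANDOM.NUMBER} internally: the class UniqueClientId and its methods are hypothetical names introduced here, and the sketch assumes the suffix is the current epoch time in milliseconds, which matches the shape of the sample values above.

```java
import java.util.Properties;

// Hypothetical helper (not part of Zerocode): mimics a timestamp-suffixed client.id.
final class UniqueClientId {

    // Appends the current epoch time in milliseconds to the given prefix.
    static String withTimestamp(String prefix) {
        return prefix + "_" + System.currentTimeMillis();
    }

    // Builds producer properties that carry the generated client.id.
    static Properties producerProperties(String prefix) {
        Properties props = new Properties();
        props.setProperty("client.id", withTimestamp(prefix));
        return props;
    }

    public static void main(String[] args) {
        // Prints something of the form test_producer_<epoch millis>.
        System.out.println(producerProperties("test_producer").getProperty("client.id"));
    }
}
```

One caveat with this sketch: two calls within the same millisecond produce the same value, so a timestamp suffix separates test runs rather than every single producer instance.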

EXAMPLE 2:

Another approach suggested by the Zerocode community is to include the date in client.id, which is well suited to testing and tracing:

client.id = test_producer_${LOCAL.DATE.TODAY:yyyy-MM-dd}

e.g.

- 1st day - client.id = test_producer_2018-03-18

- 2nd day - client.id = test_producer_2018-03-19

- 3rd day - client.id = test_producer_2018-03-20
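For readers who want to see what the yyyy-MM-dd pattern produces, here is a minimal Java sketch using java.time. It is not Zerocode's implementation of ${LOCAL.DATE.TODAY:...}; the class DatedClientId and the method forDate are hypothetical names used only for illustration.

```java
import java.time.LocalDate;
import java.time.format.DateTimeFormatter;

// Hypothetical helper (not part of Zerocode): builds a date-suffixed client.id.
final class DatedClientId {

    // Same pattern as in the placeholder example: yyyy-MM-dd.
    private static final DateTimeFormatter DAY = DateTimeFormatter.ofPattern("yyyy-MM-dd");

    // Joins the prefix and the formatted date with an underscore.
    static String forDate(String prefix, LocalDate date) {
        return prefix + "_" + date.format(DAY);
    }

    public static void main(String[] args) {
        // With today's date, every run on the same day yields the same client.id.
        System.out.println(forDate("test_producer", LocalDate.now()));
    }
}
```

Calling forDate("test_producer", LocalDate.of(2018, 3, 18)) returns test_producer_2018-03-18, the first value in the list above. Because the value changes once per day, this style groups all runs of a day under one ID rather than making each run unique.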

For additional placeholders that you can use to define client.id to suit your project requirements, see the README: https://github.com/authorjapps/zerocode#localdate-and-localdatetime-format-example

The group.id is defined in kafka_consumer.properties, as Kafka requires.

e.g. group.id=consumerGroup14 etc.

Making it unique can help you get your end-to-end testing right. It also lets you rerun your entire test suite or pack, i.e. it makes your CI build pipeline repeatable.

Because a new group has no committed offsets, this uniqueness allows the consumers to fetch old as well as new messages (if that helps), provided auto.offset.reset is set to earliest.

More examples and details: https://docs.confluent.io/current/clients/consumer.html
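As a rough sketch of the unique group.id idea, the snippet below assembles consumer properties in plain Java. The property keys (bootstrap.servers, group.id, auto.offset.reset) are standard Kafka consumer settings; the class UniqueGroupConsumerConfig, the method build and the UUID suffix are assumptions made for illustration and are not taken from Zerocode.

```java
import java.util.Properties;
import java.util.UUID;

// Hypothetical helper (not part of Zerocode or Kafka): consumer settings with a fresh group.id.
final class UniqueGroupConsumerConfig {

    // Returns properties whose group.id differs on every call.
    static Properties build(String bootstrapServers, String groupPrefix) {
        Properties props = new Properties();
        props.setProperty("bootstrap.servers", bootstrapServers);
        // A random UUID suffix is one possible way to get a group.id that was never used before.
        props.setProperty("group.id", groupPrefix + "-" + UUID.randomUUID());
        // A new group has no committed offsets; "earliest" makes it start from
        // the oldest retained message instead of only new ones.
        props.setProperty("auto.offset.reset", "earliest");
        return props;
    }

    public static void main(String[] args) {
        // localhost:9092 is a placeholder broker address.
        build("localhost:9092", "consumerGroup").forEach((k, v) -> System.out.println(k + "=" + v));
    }
}
```

The design choice here is that each test run behaves like a brand-new consumer group, so previously committed offsets from earlier runs cannot hide messages from the assertions.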

Our Experience & Learning

Zerocode allowed us to achieve this with a Java (JUnit) runner, a JSON config file, and configurable Kafka server, producer and consumer properties.

We used KSQL to move data from one topic to another to simulate the involvement of multiple microservices, as discussed above.

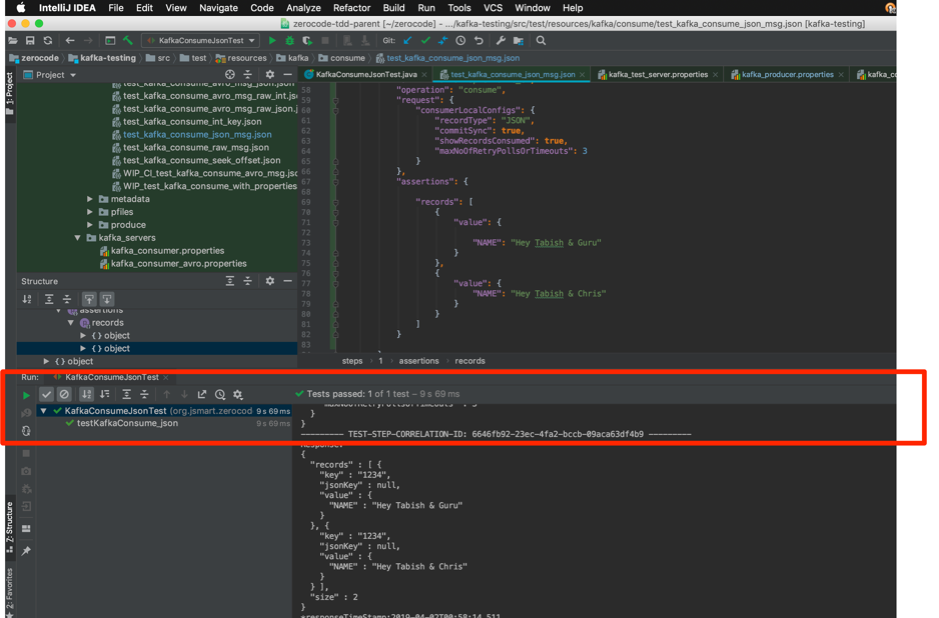

Some screenshots from our testing are shown below:

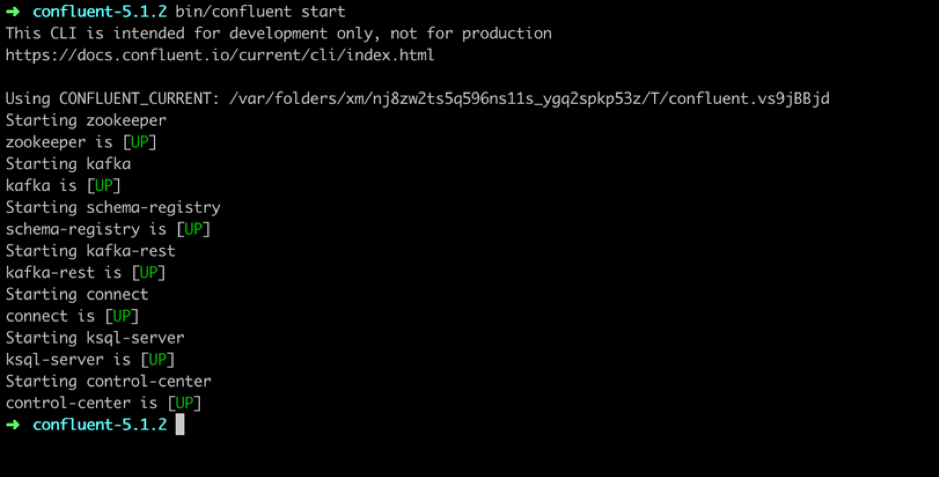

1. START CONFLUENT INSTANCE

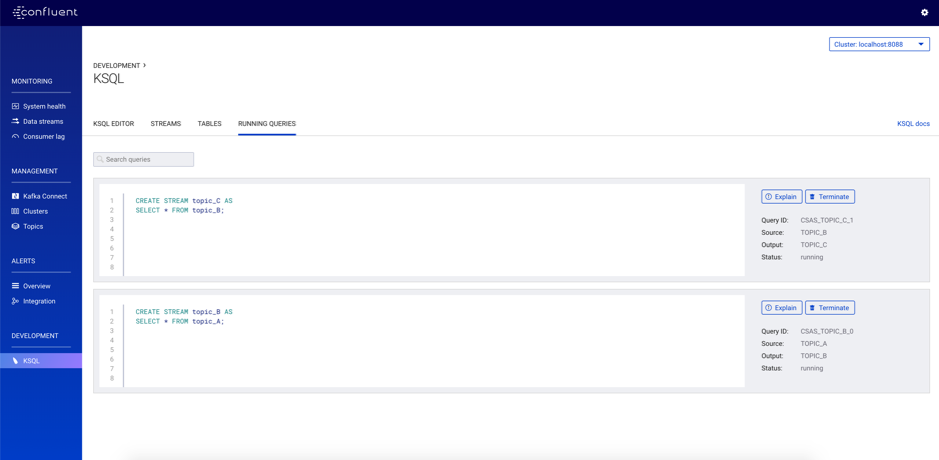

2. KSQL QUERIES

See the snapshot of the mock KSQL queries we used to move data between different topics.

Please double-check that the following dependency has been added to your project:

    <dependency>
        <groupId>org.jsmart</groupId>
        <artifactId>zerocode-tdd</artifactId>
        <version>1.3.5</version>
    </dependency>

Property configuration examples include:

- Producer Properties: https://github.com/authorjapps/hello-kafka-stream-testing/blob/master/src/test/resources/kafka_servers/kafka_producer_unique.properties

- Consumer Properties: https://github.com/authorjapps/hello-kafka-stream-testing/blob/master/src/test/resources/kafka_servers/kafka_consumer_unique.properties

Sample test cases to run:

- KafkaProduceUniqueClientIdTest.java: https://github.com/authorjapps/hello-kafka-stream-testing/blob/master/src/test/java/org/jsmart/zerocode/integration/tests/kafka/produce/KafkaProduceUniqueClientIdTest.java

- KafkaConsumeUniqueGroupIdTest.java: https://github.com/authorjapps/hello-kafka-stream-testing/blob/master/src/test/java/org/jsmart/zerocode/integration/tests/kafka/consume/KafkaConsumeUniqueGroupIdTest.java

Below is a sample JSON configuration which we used in one of our test scenarios:

Test Results

Security

In terms of security, Zerocode offers the following:

- For OAuth2, please see a short and precise blog post in the DZone Security Zone: https://dzone.com/articles/oauth2-authentication-in-zerocode. Please reach out to the community or to Zerocode if you need further information.

- For Corporate Proxy configuration, you can follow the README section here: https://github.com/authorjapps/zerocode#soap-method-invocation-where-corporate-proxy-enabled

- This applies to any HTTP API invocation, for instance REST or SOAP.

- SAML/JWT, with working examples in the repo: https://github.com/authorjapps/zerocode#using-any-properties-file-key-value-in-the-steps

- If tokens are dynamic, it is still easy to inject them into the header at runtime, as https://github.com/santhoshTpixler has explained in his blog.

- If you use OpenAM, RedHat SSO or simple Basic Auth, you can refer to the examples in the README: https://github.com/authorjapps/zerocode#http-basic-authentication-step-using-zerocode. You can apply it manually per test case, or embed it in the HttpClient as a one-off (with less maintenance overhead).

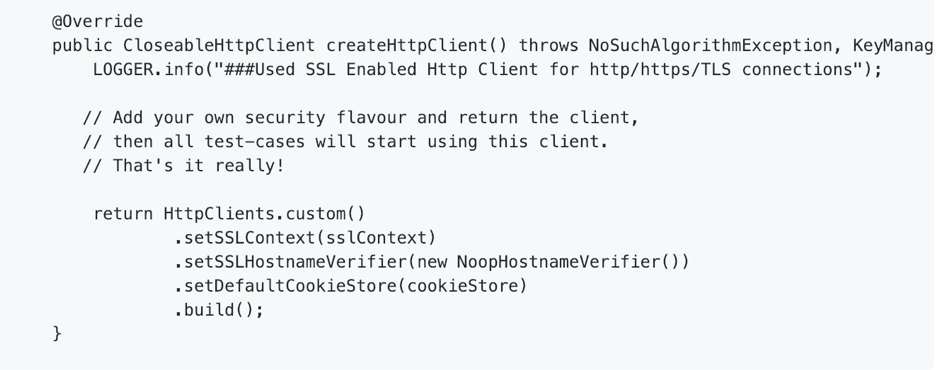

- Custom HTTP client: Zerocode's HTTP client already supports both HTTP and HTTPS connections, but you can override it and add or remove security features to match your project requirements.

It is then simple and straightforward to use. Just annotate your test class or suite class:

@UseHttpClient(CustomHttpClient.class)

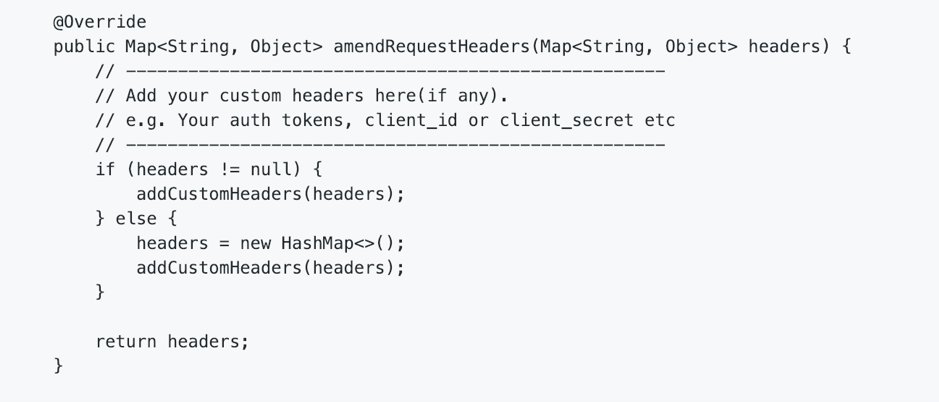

In a similar fashion, you can inject any custom headers you need.

e.g.

Feature & Future

From our discussions with the broad Zerocode contributing community on the feature-comparison front, Zerocode is in the process of collecting feedback and data from its customers to capture the benefits of, and preference for, Zerocode, e.g.

- From Postman (collections) to Zerocode

- From other Step-Definition based BDD tools to Zerocode etc.

We and Zerocode would love to hear your feedback on this.

Wrapping Up

Distributed testing is tricky, has no silver bullet, and is renowned for its unique corner cases. Countering them requires a lot of design thinking and a set of SDLC (Software Development Lifecycle) practices, from process design to production. The goal of this blog is to share some insights on how to handle and scale testing as needed with an existing, well-thought-out and well-documented option, Zerocode, aimed at preserving quality.

It is important to state clearly that Zerocode is exceptional for:

- Application Integration Testing

- End to End testing

- System Integration testing

- Load/Stress testing

- API Mock making (using wiremock JSON DSLs)

It does all of this in a declarative way, keeping the hassle for developers and testers to a minimum.

Our conclusion is that, when choosing a suitable testing library or framework, you should look for:

- Ease of use

- Less syntax overhead

- Ease of handling and asserting payloads

- Ease of extending the test runners

- Ease of adding custom security features

- Ease of understanding the test flow for manual testers

We sincerely hope this helps the community to some extent and helps bridge the gap with our kindred spirits from Zerocode.

Honourable References

For more detailed information on how to test Kafka, or REST APIs that produce to or consume from Kafka, please see the following link or get in touch via the comments below: https://github.com/authorjapps/zerocode